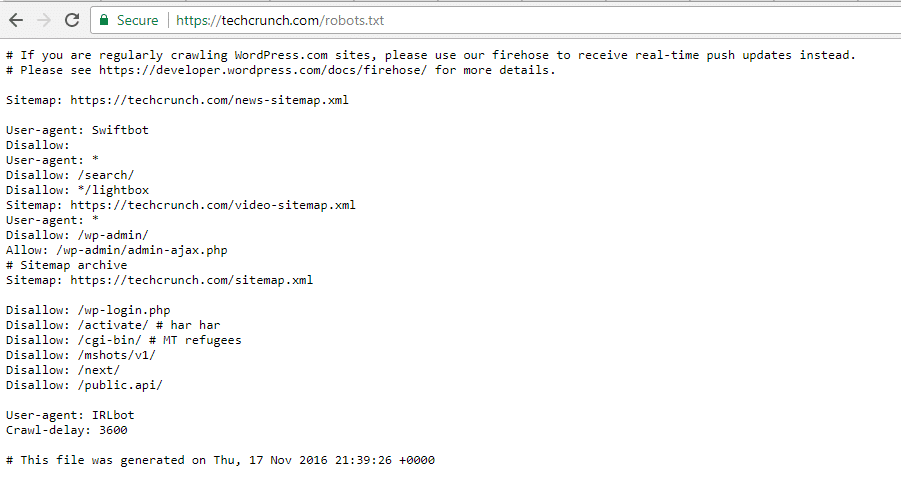

What is a Robots.txt File?

Before a search engine spiders any page, it will check the robots.txt. This file tells bots which URL paths they have permission to visit. But these entries are only directives, not mandates.
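To make that concrete, here is a minimal sketch of how a well-behaved bot might consult robots.txt before visiting a URL, using Python's standard `urllib.robotparser` module. The rules, the `ExampleBot` user-agent and the `www.example.com` URLs are made up for illustration. Nothing forces a bot to run a check like this, which is why the entries are directives rather than mandates.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, invented for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /admin/
Disallow: /search
"""


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the rules in robots_txt permit user_agent to fetch url."""
    parser = RobotFileParser()
    # parse() takes an iterable of lines, so no network request is made.
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    for path in ("/blog/robots-guide", "/admin/login"):
        url = "https://www.example.com" + path
        print(path, is_allowed(ROBOTS_TXT, "ExampleBot", url))
```

Python's parser uses simple prefix matching, so treat it as an approximation of how a search engine reads the file rather than an exact replica.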

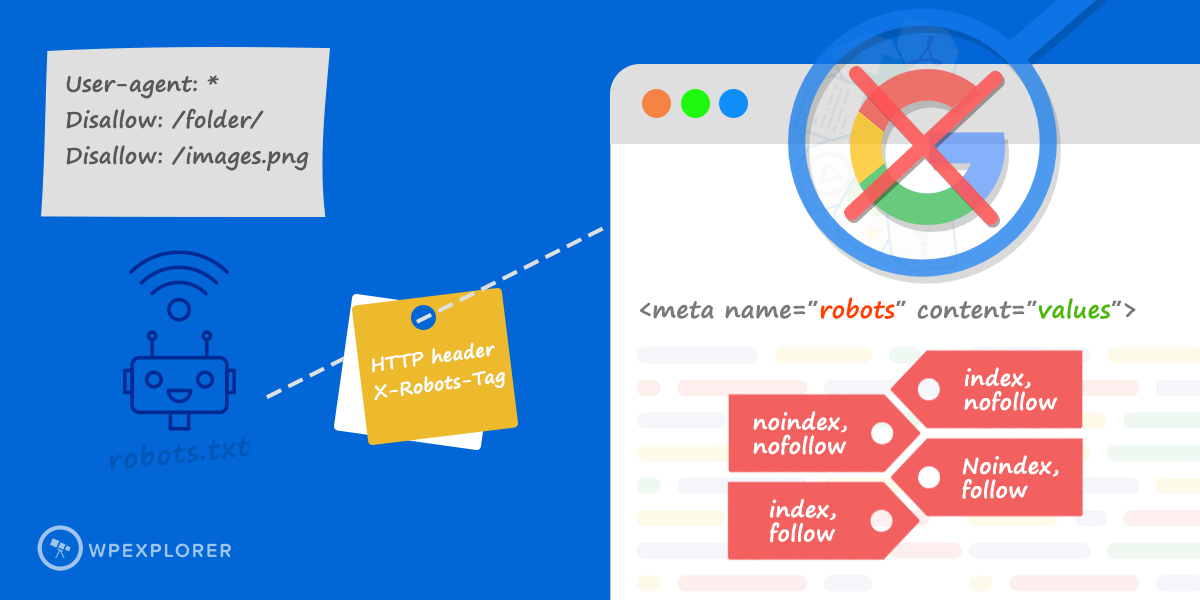

Optimising for crawl budget and blocking bots from indexing pages are concepts many SEOs are familiar with. But the best practices have altered significantly over recent years, and one small change to a robots.txt file or robots tags can have a dramatic impact on your website. To ensure the impact is always positive for your site, today we are going to delve into optimising crawl budget and the best practice robots checklist.

A search engine spider has an “allowance” for how many pages it can and wants to crawl on your site. This is known as “crawl budget”. Find your site’s crawl budget in the Google Search Console (GSC) “Crawl Stats” report. Note that the GSC figure is an aggregate of 12 bots, which are not all dedicated to SEO. It also gathers AdWords and AdSense bots, which are SEA (search engine advertising) bots. Thus, this tool gives you an idea of your global crawl budget but not its exact distribution.

To make the number more actionable, divide the average pages crawled per day by the total crawlable pages on your site (you can ask your developer for the number or run an unlimited site crawler). This will give you an expected crawl ratio for you to begin optimising against. Want to go deeper? Get a more detailed breakdown of Googlebot’s activity, such as which pages are being visited, as well as stats for other crawlers, by analysing your site’s server log files.

There are many ways to optimise crawl budget, but an easy place to start is to check the GSC “Coverage” report to understand Google’s current crawling and indexing behaviour. If you see errors such as “Submitted URL marked ‘noindex’” or “Submitted URL blocked by robots.txt”, work with your developer to fix them. For any robots exclusions, investigate them to understand if they’re strategic from an SEO perspective. In general, SEOs should aim to minimise crawl restrictions on robots. Improving your website’s architecture to make URLs useful and accessible for search engines is the best strategy. Google themselves note that “a solid information architecture is likely to be a far more productive use of resources than focusing on crawl prioritization”. That being said, it’s beneficial to understand what can be done with robots.txt files and robots tags to guide crawling, indexing and the passing of link equity. And more importantly, when and how to best leverage them for modern SEO.

Note that the robots.txt Tester tool does not make changes to the actual file on your site; it only tests against the copy hosted in the tool.
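The crawl ratio described above (average pages crawled per day divided by total crawlable pages) is simple arithmetic, sketched here in Python. The function name and the sample figures are invented for illustration. In practice the first number would come from the GSC “Crawl Stats” report and the second from your developer or a site crawl.

```python
def crawl_ratio(avg_pages_crawled_per_day: float, total_crawlable_pages: int) -> float:
    """Share of the site's crawlable pages that is crawled on an average day."""
    if total_crawlable_pages <= 0:
        raise ValueError("total_crawlable_pages must be a positive number")
    return avg_pages_crawled_per_day / total_crawlable_pages


if __name__ == "__main__":
    # Hypothetical figures: 1,500 pages crawled per day on a 10,000-page site.
    ratio = crawl_ratio(1_500, 10_000)
    print(f"Crawl ratio: {ratio:.0%} of crawlable pages per day")
```

Because the GSC figure aggregates several bots, treat the result as a rough baseline to track over time, not a precise measure of Googlebot’s behaviour.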

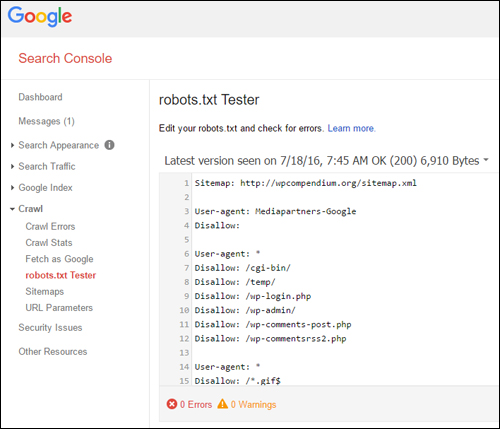

The robots.txt Tester tool shows you whether your robots.txt file blocks Google web crawlers from specific URLs on your site. For example, you can use this tool to test whether the Googlebot-Image crawler can crawl the URL of an image you wish to block from Google Image Search. You can submit a URL to the robots.txt Tester tool. The tool operates as Googlebot would to check your robots.txt file and verifies that your URL has been blocked properly.

1. Open the tester tool for your site, and scroll through the robots.txt code to locate the highlighted syntax warnings and logic errors. The number of syntax warnings and logic errors is shown immediately below the editor.
2. Type in the URL of a page on your site in the text box at the bottom of the page.
3. Select the user-agent you want to simulate in the dropdown list to the right of the text box.
4. Check to see if the TEST button now reads ACCEPTED or BLOCKED to find out if the URL you entered is blocked from Google web crawlers.
5. Edit the file on the page and retest as necessary. Note that changes made in the page are not saved to your site! See the next step.
6. Copy your changes to your robots.txt file on your site.
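The edit-and-retest loop can also be approximated offline. The sketch below is not Google's tool or its matching logic. It uses Python's standard `urllib.robotparser` to compare a hypothetical current robots.txt with an edited draft for the `Googlebot-Image` user-agent. The rules, paths and the `check_url` helper are assumptions made for this example. As with the Tester, nothing here touches the live file on your site.

```python
from urllib.robotparser import RobotFileParser


def check_url(robots_txt: str, user_agent: str, url: str) -> str:
    """Mimic the Tester's verdict labels for one user-agent and URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())  # local copy only, no network access
    return "ACCEPTED" if parser.can_fetch(user_agent, url) else "BLOCKED"


# Hypothetical current file: only a catch-all group.
CURRENT = """\
User-agent: *
Disallow: /checkout/
"""

# Draft edit: add a group that keeps private images out of image search.
EDITED = CURRENT + """
User-agent: Googlebot-Image
Disallow: /images/private/
"""

IMAGE_URL = "https://www.example.com/images/private/photo.png"

if __name__ == "__main__":
    print("before edit:", check_url(CURRENT, "Googlebot-Image", IMAGE_URL))
    print("after edit: ", check_url(EDITED, "Googlebot-Image", IMAGE_URL))
```

Python's parser does plain prefix matching and does not implement Google's wildcard or longest-match rules, so confirm the final file in the Tester itself before copying it to your site.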
